AI agent activity grew 300x in 2025 as employees started building and connecting personal agents to their calendars, cloud drives, and other enterprise applications to gain an edge and boost productivity. Businesses are encouraging this experimentation, favoring speed over rigorous security review.

With each new integration, agents are granted access to data and can be given read/write privileges without any oversight. The result is a sprawling network of invisible agents that no one owns or monitors. Across our customer network, we’ve observed:

Security remains accountable for these ungoverned agents, even as they operate outside every traditional visibility and control layer built for humans. Your SIEM, CASB, EDR, SASE, and Zero Trust tools can't capture the true blast radius of a single compromised agent. And the newer AI security platforms lack the application-layer context needed to detect how each agent’s permissions can stack into toxic risk combinations.

To move from reacting to business-deployed agents to leading AI adoption across the business, your security team must be able to identify and prevent these hidden risks in real time.

When users deploy agents without security's guidance, access is granted too broadly, least privilege is ignored, and those configurations are never revisited. No single decision looks wrong on its own, but they accumulate, creating risky data exposure pathways that none of your existing tools are able to surface.

The real security blind spot isn’t the existence of agents themselves. It’s the combination of capabilities that are individually acceptable but collectively catastrophic. The five scenarios below show exactly how these combinations play out undetected in enterprise environments.

Knowing how to spot and stop each one before it causes an incident will be vital for any security team that wants to scale AI agent adoption across the business.

A RevOps manager builds an Agentforce agent to automate pipeline reporting. During setup, she grants it broad read access across the entire CRM (accounts, contacts, opportunities, and compensation data) to avoid running into any permission errors. She intends to tighten the scope later, but forgets.

The agent performs well and gets shared org-wide to streamline QBR reporting for sales reps. Now every account executive, SDR, and analyst is querying an agent that can see far more than any individual user is authorized to view, including executive compensation plans and confidential M&A records.

Once this happens, every access control you had is bypassed without a single alert firing, because the agent itself was authorized to access those records. It simply became the highest common denominator: everyone who can query it can now see everything it can see.
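The exposure in this scenario can be pictured as a set difference between what the shared agent can read and what each user is entitled to read. The following is a minimal sketch under assumed names (`Agent`, `overexposed_objects`, and the object labels are hypothetical, not an Agentforce or Obsidian API):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    name: str
    readable_objects: frozenset[str]  # hypothetical CRM object types the agent can read


def overexposed_objects(agent: Agent, user_entitlements: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each user, return the object types reachable only through the agent."""
    return {
        user: set(agent.readable_objects) - allowed
        for user, allowed in user_entitlements.items()
        if agent.readable_objects - allowed  # skip users with no extra exposure
    }


agent = Agent(
    "pipeline-reporter",
    frozenset({"accounts", "contacts", "opportunities", "compensation"}),
)
users = {
    "account_exec": {"accounts", "contacts", "opportunities"},
    "revops_manager": {"accounts", "contacts", "opportunities", "compensation"},
}

exposure = overexposed_objects(agent, users)
print(exposure)
```

Any non-empty result means the agent has become a path around a user's own access controls, even though every individual grant was technically authorized.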

A marketing analyst builds a custom GPT connected to Google Drive, SharePoint, and HubSpot to pull campaign data and generate reports. He embeds his own credentials, grants broad read permissions across shared drives, and sets visibility to "anyone with the link" for easy collaboration with external agency partners.

The agent works well. The link gets shared widely, then forwarded beyond its intended audience. Now anyone who holds the link, including people entirely outside the organization, has a pipeline for pulling documents, contracts, and customer data.

The agent’s traffic looks normal since it is doing exactly what it was built to do. But at the speeds AI agents operate, it only takes a few seconds for a mass data exfiltration event to occur.

An engineering team deploys an AI-powered development workflow: a planning agent reads Jira tickets and passes requirements to a coding agent, which pushes the resulting changes to GitHub, which in turn triggers a deployment agent that publishes to staging. Each handoff is automated, and each agent implicitly trusts the output of the previous one.

A threat actor injects malicious instructions into a Jira ticket description. The planning agent reads it. The coding agent acts on it. The deployment agent ships it. No human reviewed any step, and each individual agent behaved correctly. Yet the chain as a whole carried out the attack, with no sophisticated social engineering required.

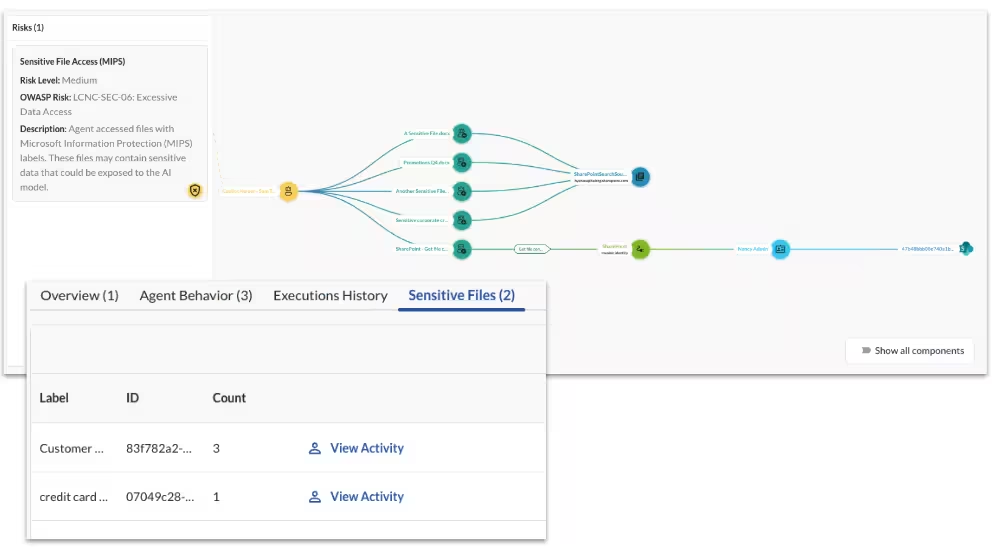
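One way to picture the missing control in this chain is a check at the final handoff that refuses to act on content from an untrusted origin unless a human has signed off. This is only an illustrative sketch: `Handoff`, `UNTRUSTED_SOURCES`, and `may_deploy` are hypothetical names, and real pipelines would need to propagate origin metadata through every agent.

```python
from dataclasses import dataclass

# Hypothetical labels for where a handoff's content originated.
UNTRUSTED_SOURCES = {"jira_ticket"}


@dataclass
class Handoff:
    origin: str            # where the instructions first entered the chain
    payload: str           # what the next agent is being asked to do
    human_approved: bool = False


def may_deploy(handoff: Handoff) -> bool:
    """Allow deployment only if the content is trusted or a human signed off."""
    return handoff.origin not in UNTRUSTED_SOURCES or handoff.human_approved


injected = Handoff(origin="jira_ticket", payload="add export endpoint")
reviewed = Handoff(origin="jira_ticket", payload="add export endpoint", human_approved=True)

print(may_deploy(injected), may_deploy(reviewed))
```

The point of the sketch is that trust is decided by where the content came from, not by whether the previous agent behaved correctly.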

A healthcare operations team deploys a Copilot agent to streamline patient coordination, pulling records from their EHR system, summarizing notes, and routing tasks across teams. It's a genuine productivity win.

Six months later, a compliance audit asks about the agent. The problem isn’t that the team can’t see that the agent exists. It’s that when the agent takes an action, nobody can trace it back to the original authorization, the data it touched, or the human who initiated the workflow. In a regulated environment, that gap is unacceptable.

A senior engineer builds an internal agent on Microsoft Foundry to automate code review and repository management, connected to Azure DevOps via his personal OAuth token with admin-level access. It runs reliably. He's the only one who knows how it works.

He leaves the company. HR offboards him, deactivates his SSO account, and wipes his laptop. The agent keeps running. The OAuth token was never tied to his SSO lifecycle, so it was never revoked. It remains valid.

Because the token was valid when issued, nothing flags it as compromised. It won't appear on any access review. It has no owner to ping during an audit. It is, for all practical purposes, a ghost with admin keys to your production repositories, and no one knows it's there.
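Conceptually, finding this kind of ghost means joining token grants against the list of currently active identities. A minimal sketch, assuming you can export both lists (the `TokenGrant` fields and `find_orphaned_tokens` are hypothetical, not an Azure DevOps or Obsidian API):

```python
from dataclasses import dataclass


@dataclass
class TokenGrant:
    token_id: str
    owner: str      # identity that authorized the token
    scope: str      # hypothetical scope label, e.g. "admin"
    revoked: bool = False


def find_orphaned_tokens(grants: list[TokenGrant], active_users: set[str]) -> list[TokenGrant]:
    """Return live tokens whose owner no longer has an active account."""
    return [g for g in grants if not g.revoked and g.owner not in active_users]


grants = [
    TokenGrant("tok-1", "departed.engineer", "admin"),
    TokenGrant("tok-2", "current.engineer", "read"),
    TokenGrant("tok-3", "former.contractor", "read", revoked=True),
]
active_users = {"current.engineer"}

orphans = find_orphaned_tokens(grants, active_users)
print([g.token_id for g in orphans])
```

The hard part in practice is not the join but getting a complete inventory of tokens that live outside the SSO lifecycle.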

Every scenario above shares a common thread: the risk wasn’t created by a single bad decision, but by the absence of a system that can see how all the pieces connect.

Today, there is no authoritative system of record for what agents exist, who owns them, or what they’re doing. Security teams are left chasing exposure after the fact because tools built for human behavior were never designed to correlate agent activity, application permissions, and user identities in a single view. That unified context is what’s required to spot a toxic combination before it becomes a breach.

That's the gap Obsidian Security closes. Not by controlling how third-party agents are built, but by governing how they operate once they're live in your environment.

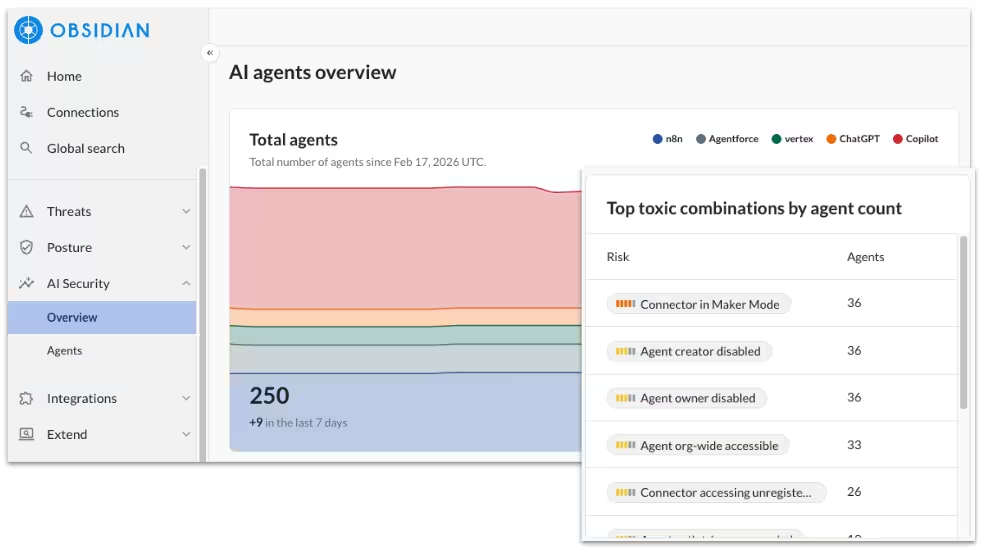

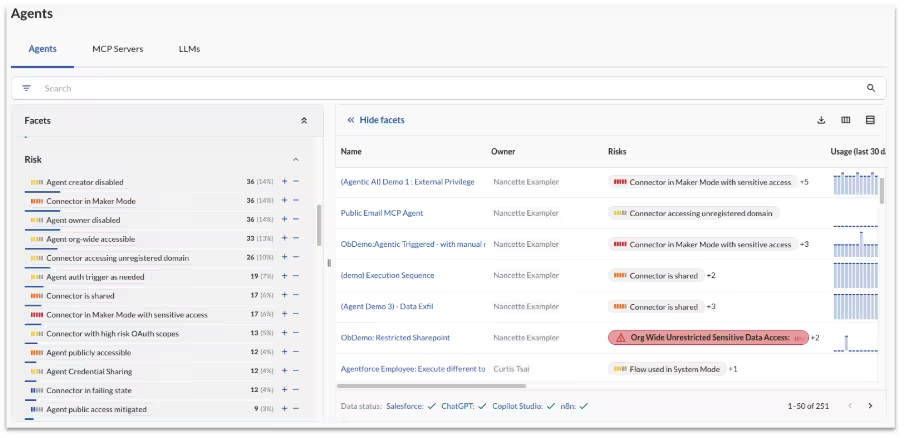

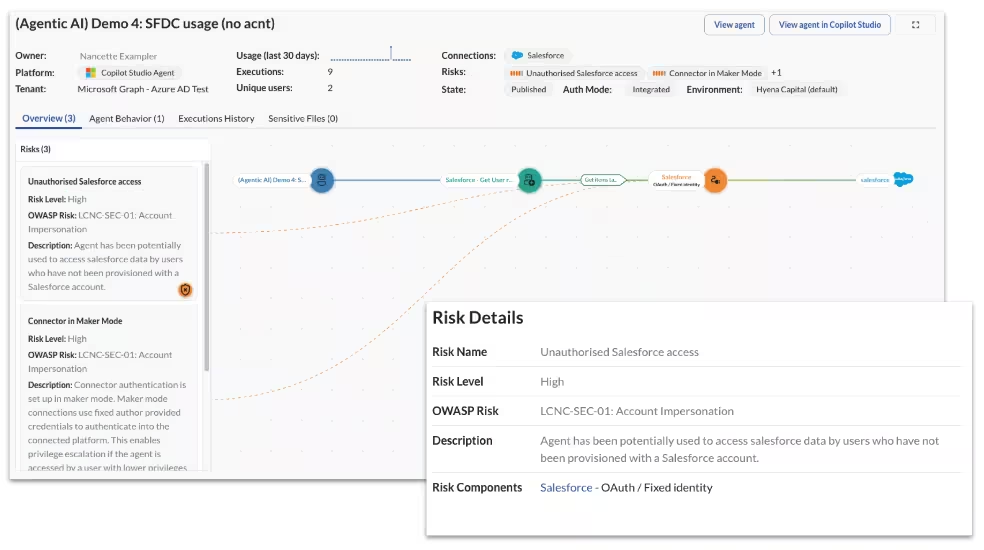

Obsidian automatically surfaces every AI agent operating across your environment through API connections into the most popular AI platforms, paired with real-time browser detections. But knowing an agent exists isn't enough.

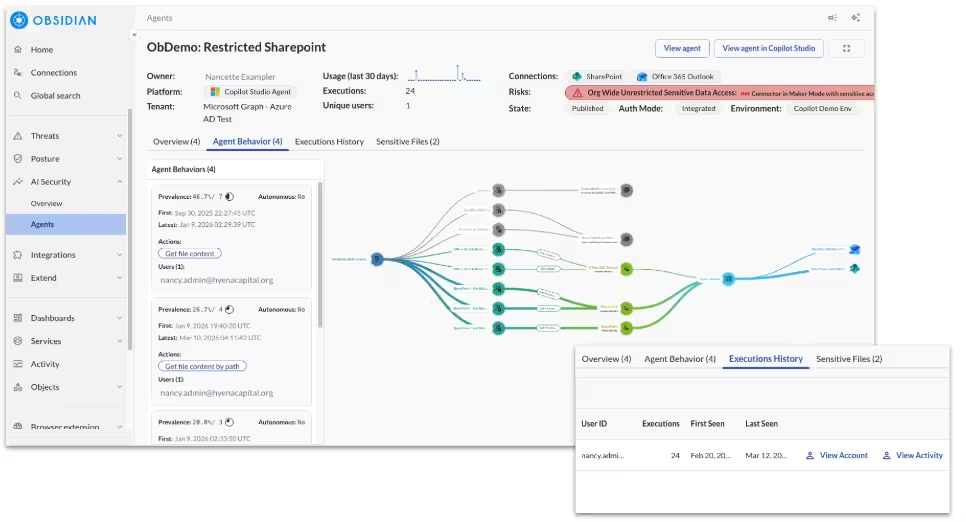

Obsidian's Knowledge Graph maps every agent to its owner, its connected applications, the permissions it holds, and the data it's actually touching. Correlating risk across identity, entitlements, and behavior in a single view is what transforms hidden exposure into something security teams can actually see and act on.

Obsidian continuously compares what an agent is entitled to do against what it actually does. For over-permissioned org-wide deployments, ghost agents running on stale credentials, or those that quietly accumulated privileges over time, security teams get a clear signal of where access has drifted beyond what's needed.

That means fewer blanket permissions left in place because no one got around to reviewing them, and the confidence to right-size access without having to guess what's safe to remove.
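The entitlement-versus-usage comparison described above reduces, in its simplest form, to a set difference. The sketch below is an assumption-laden illustration (the function name and permission labels are hypothetical), not a description of how Obsidian computes drift:

```python
def unused_permissions(granted: set[str], observed: set[str]) -> set[str]:
    """Permissions the agent holds but has not been seen using."""
    return granted - observed


# Hypothetical permission labels for a reporting agent.
granted = {"crm.read.accounts", "crm.read.opportunities", "crm.read.compensation", "crm.write.accounts"}
observed = {"crm.read.accounts", "crm.read.opportunities"}

candidates_for_removal = unused_permissions(granted, observed)
print(sorted(candidates_for_removal))
```

Permissions that appear in the result are candidates for right-sizing, subject to a long enough observation window to avoid removing something used only occasionally.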

When an agent is modified so that it can move sensitive data at high volumes, or new permissions are added that create a toxic combination, Obsidian fires alerts in real time.

Security teams don't have to wait for manual reviews or a user report to discover these changes. The signal arrives while there's still time to act, with enough context to understand what the agent is doing, what data it can touch, and what needs to be contained.
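A toxic combination can be thought of as a rule over the full set of capabilities an agent holds, where no single capability triggers the rule on its own. The following sketch uses made-up capability labels and rules purely for illustration:

```python
# Hypothetical rules: each pairs a set of capabilities with the reason the
# combination is risky. None of the individual capabilities is flagged alone.
TOXIC_COMBINATIONS = [
    (frozenset({"read:shared_drives", "share:anyone_with_link"}),
     "internal documents reachable by anyone holding the link"),
    (frozenset({"read:untrusted_input", "deploy:staging"}),
     "untrusted text can reach a deployment step"),
]


def toxic_findings(capabilities: set[str]) -> list[str]:
    """Return the reason for every rule fully contained in the agent's capabilities."""
    return [reason for combo, reason in TOXIC_COMBINATIONS if combo <= capabilities]


before = {"read:shared_drives"}
after = before | {"share:anyone_with_link"}  # a single permission change

print(toxic_findings(before))
print(toxic_findings(after))
```

Evaluating the rules each time an agent's permissions change is what turns a periodic review into a real-time signal.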

For organizations operating under HIPAA, SOC 2, GDPR, or other compliance frameworks, the absence of an audit trail isn't just an operational gap; it's a liability in itself. Obsidian maintains a complete, immutable record of every agent action: what was accessed, when, and under whose identity.

When the auditor asks which agents can access sensitive data, the answer exists. When a regulator questions whether access stayed within authorized scope, you can prove it did.
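To make "immutable record" concrete, one generic technique for tamper evidence is a hash chain, where each entry's hash covers the previous entry's hash. This is a sketch of that general technique using Python's standard `hashlib` and `json` modules; the article does not say how Obsidian stores its records, and the field names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(log: list[dict], record: dict) -> None:
    """Append a record whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev_hash": prev_hash, "hash": _digest(prev_hash, record)})


def verify(log: list[dict]) -> bool:
    """Recompute the chain and report whether any entry was altered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_record(log, {"agent": "copilot-coordinator", "action": "read", "resource": "patient_note", "on_behalf_of": "nurse_a"})
append_record(log, {"agent": "copilot-coordinator", "action": "route_task", "resource": "task_queue", "on_behalf_of": "nurse_a"})

intact = verify(log)
log[0]["record"]["on_behalf_of"] = "someone_else"  # simulate tampering
tampered = verify(log)
print(intact, tampered)
```

Each record carries the agent, the action, the resource, and the initiating identity, which are the three questions the audit scenario earlier could not answer.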

Agents are being built and deployed faster than any security team can manually review. Without a scalable governance layer, the only options are to slow adoption down or accept the risk, neither of which is an acceptable answer.

Obsidian's unified control plane scales with the pace of the business, giving security teams the ability to discover, assess, and govern agents as quickly as they're created. Not as a bottleneck, but as the infrastructure that makes confident AI adoption possible.

Blocking AI is not the answer. But adoption without guardrails turns every new agent into an ungoverned liability. The mandate is to move fast and stay in control, and that requires visibility beyond just the agents themselves.

The teams that get this right won't just avoid incidents. They'll be the reason their organizations can move fast with AI.
