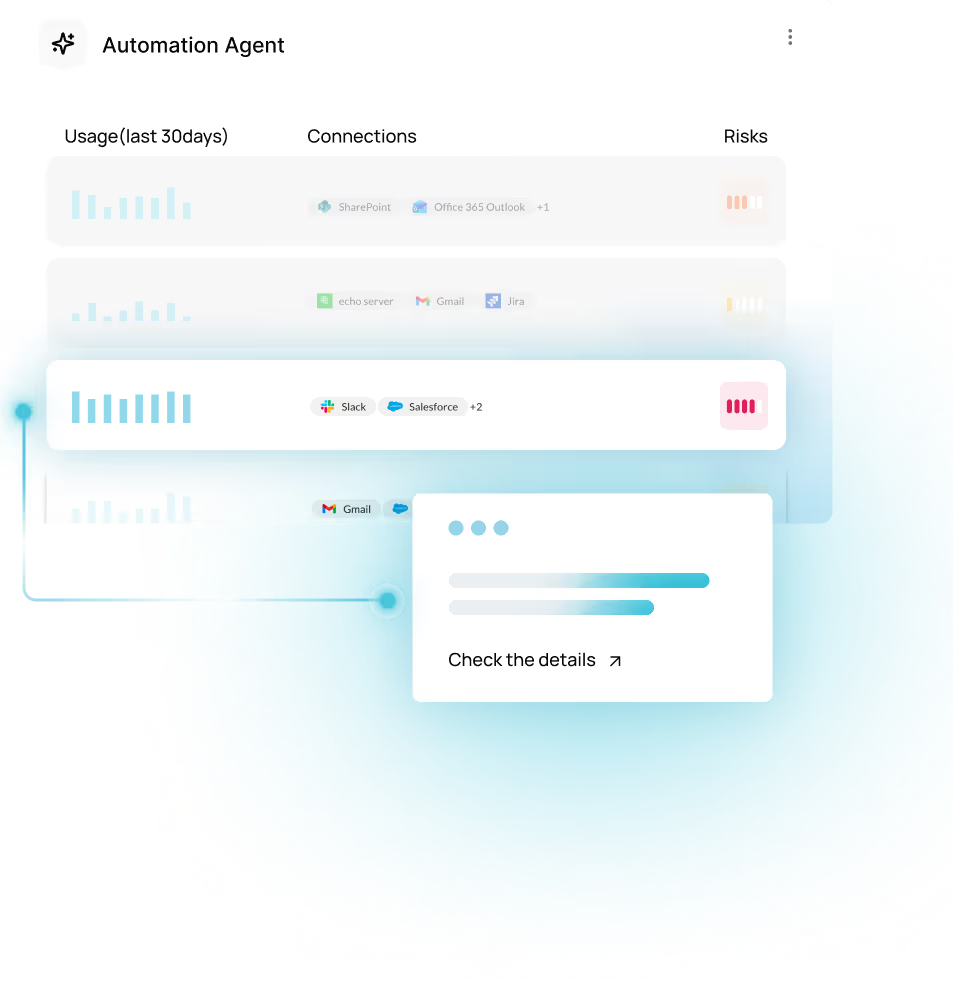

Get the complete picture of tools invoked, inherited permissions, and data moved across every agent in your environment. And the controls to act on it at runtime.

Human workflows are becoming automated ones. Risk is now continuous, carried out by non-human identities acting across apps at machine speed. The question is no longer who logged in. It is what changed, what moved and what caused it. Traditional tools weren’t built for this.

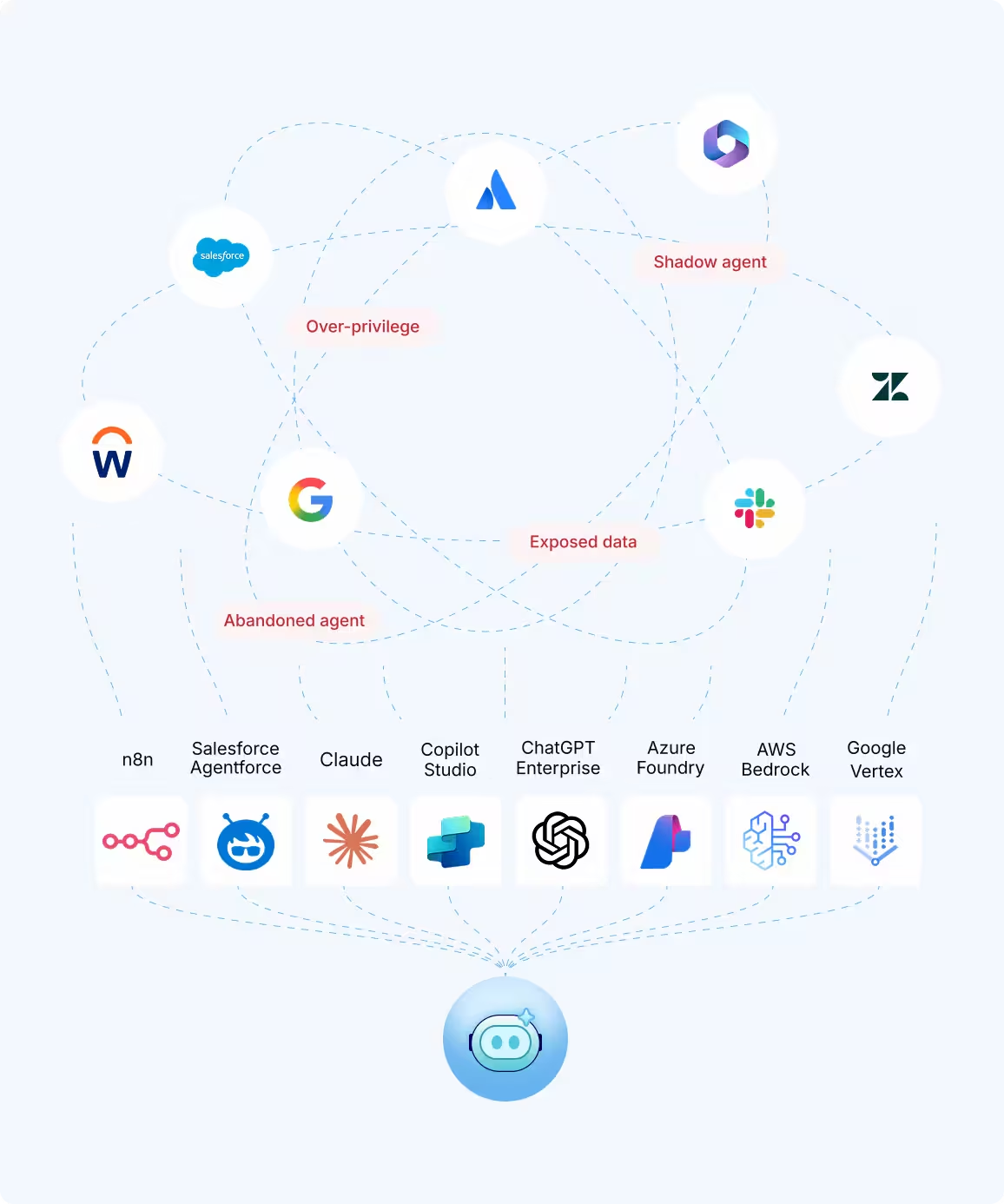

Most risks don't come from a single misconfiguration. They come from combinations – permissions and access patterns that look fine on their own, until they converge.

Discover all your AI agents from day one. Understand what they can actually do. Enforce guardrails and policies at runtime before impact occurs. Enable safe AI adoption without the guesswork.

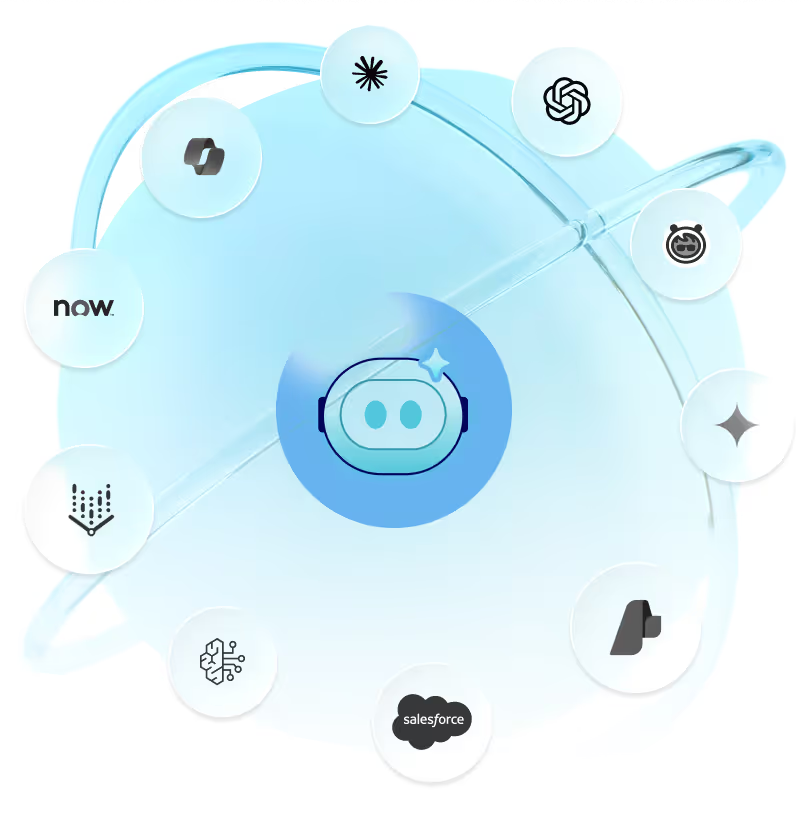

Every team builds with a different tool. Each platform has its own permissions model, its own integrations, and its own blind spots. Obsidian inventories and enforces governance across SaaS, cloud, endpoints and code platforms, so coverage never depends on which tool someone chose to build with.

Obsidian delivers immediate visibility from the moment you connect. The picture gets sharper as you add more.

AI agent risk spans roles. Obsidian gives each one the visibility it needs, so innovation doesn’t slow to a halt.

Obsidian inventories agents across platforms and shows the tools they invoke, the permissions they inherit, and the data they move. It also maps ownership, lineage, MCP servers, and LLM usage, including model changes.

Yes. Agents often don't appear in SSO, Active Directory, or human activity monitoring tools. Obsidian discovers both sanctioned and shadow AI agents from day one, regardless of where they were built or how they authenticate.

Obsidian flags orphaned agents with persistent access, public URLs that expose agents, unapproved external services, unsanctioned MCP connections, silent model swaps, and shared credentials with broad OAuth scopes. Risk is scored based on toxic combinations, not just individual misconfigurations.
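To make the idea of scoring on combinations concrete, here is a minimal Python sketch. It is purely illustrative and is not Obsidian's scoring model: the finding labels, weights, and combination list are all hypothetical, chosen only to echo the flags listed above.

```python
from dataclasses import dataclass

# Hypothetical per-finding weights (illustrative values, not product data).
INDIVIDUAL_WEIGHTS = {
    "orphaned_owner": 2,
    "public_url": 2,
    "broad_oauth_scope": 2,
    "shared_credential": 2,
    "unsanctioned_mcp": 2,
}

# Hypothetical "toxic combinations": sets of findings that are treated as
# riskier together than the sum of their individual weights.
TOXIC_COMBINATIONS = [
    (frozenset({"public_url", "broad_oauth_scope"}), 10),
    (frozenset({"orphaned_owner", "shared_credential"}), 8),
]


@dataclass(frozen=True)
class Agent:
    name: str
    findings: frozenset = frozenset()


def risk_score(agent: Agent) -> int:
    """Sum individual weights, then add a bonus for each toxic combination present."""
    base = sum(INDIVIDUAL_WEIGHTS.get(f, 0) for f in agent.findings)
    bonus = sum(
        weight
        for combo, weight in TOXIC_COMBINATIONS
        if combo <= agent.findings  # every finding in the combo is present
    )
    return base + bonus


if __name__ == "__main__":
    exposed = Agent("support-bot", frozenset({"public_url"}))
    converged = Agent("sales-bot", frozenset({"public_url", "broad_oauth_scope"}))
    print(exposed.name, risk_score(exposed))
    print(converged.name, risk_score(converged))
```

In this sketch, an agent with two findings that form a listed combination scores far higher than two separate agents with one finding each, which is the behavior the "toxic combinations" framing describes.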

Both. Obsidian enforces guardrails at runtime, including blocking privilege escalation, excessive data access, and policy violations at the moment of execution, not after the fact.

With agent platforms alone, you get full visibility into your agent footprint. Adding enterprise applications lets Obsidian govern what agents can actually execute, map multi-hop access across apps, trace blast radius, and apply fine-grained runtime enforcement at the application level.

Yes. Coverage spans SaaS, cloud, endpoints, and code platforms. Governance doesn't depend on which tool a team chose to build with.

Obsidian continuously maps every agent, permission, and action at runtime, giving security teams a single source of truth. Approvals become evidence-based and faster, and teams have ready answers when incidents or audits arise.

CISOs, AI Security Leads, and App Security Leads. Each role gets live inventory, permission data, runtime risk, and over-permissioned agent alerts so they can govern AI without slowing adoption.