Discover every tool, govern every agent, and secure every prompt, so your teams can move fast without moving blind.


Obsidian gives you the visibility, controls, and evidence to make AI safe to adopt quickly instead of blocking it.

Your team shouldn't need six tools and a spreadsheet to govern AI. Obsidian covers every surface (tools, agents, prompts, permissions, and MCP servers), so nothing falls through the cracks.

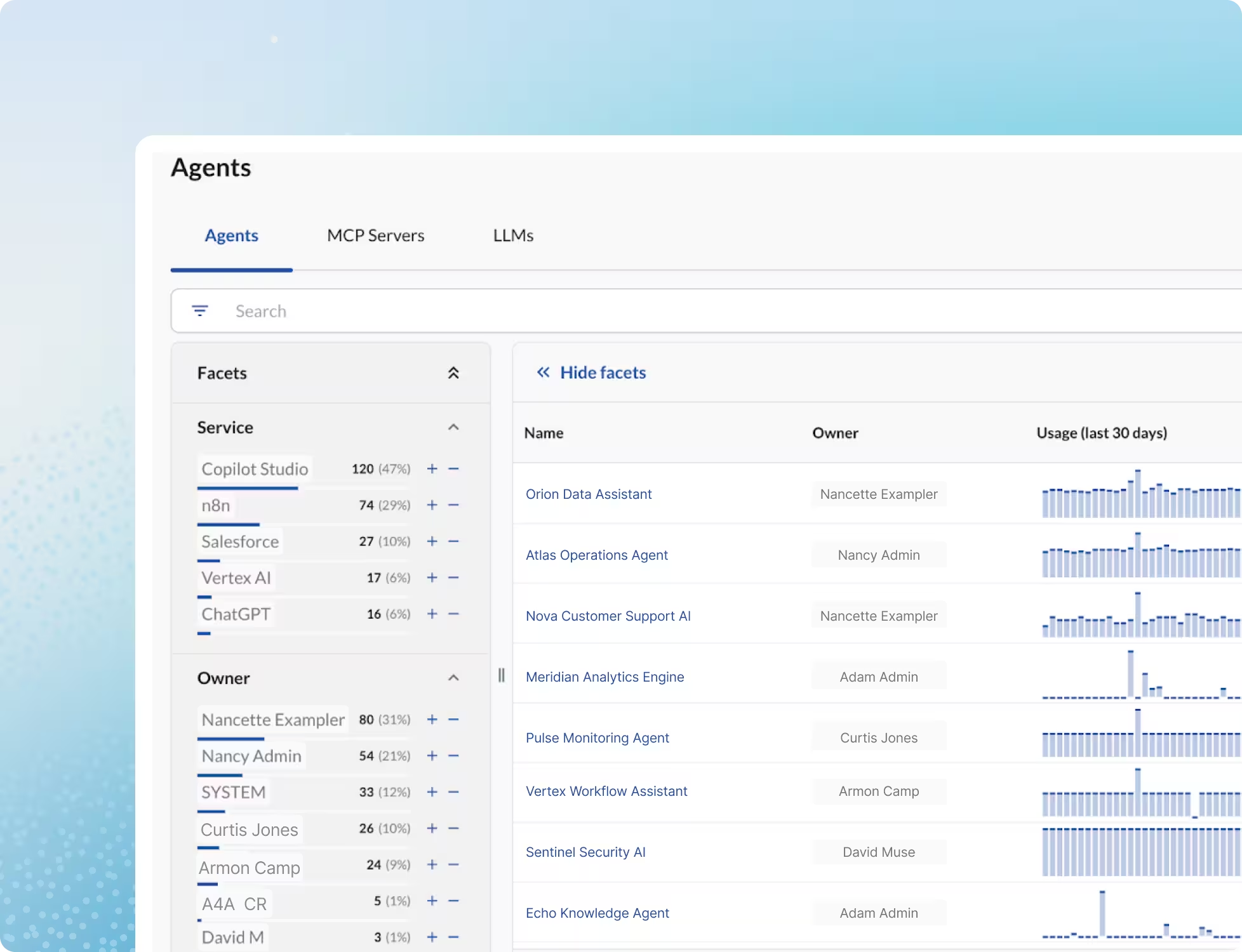

Get a continuous, authoritative view of every AI tool, agent, LLM, and MCP server operating across your environment, so security always knows what's running, who owns it, and what it can access.

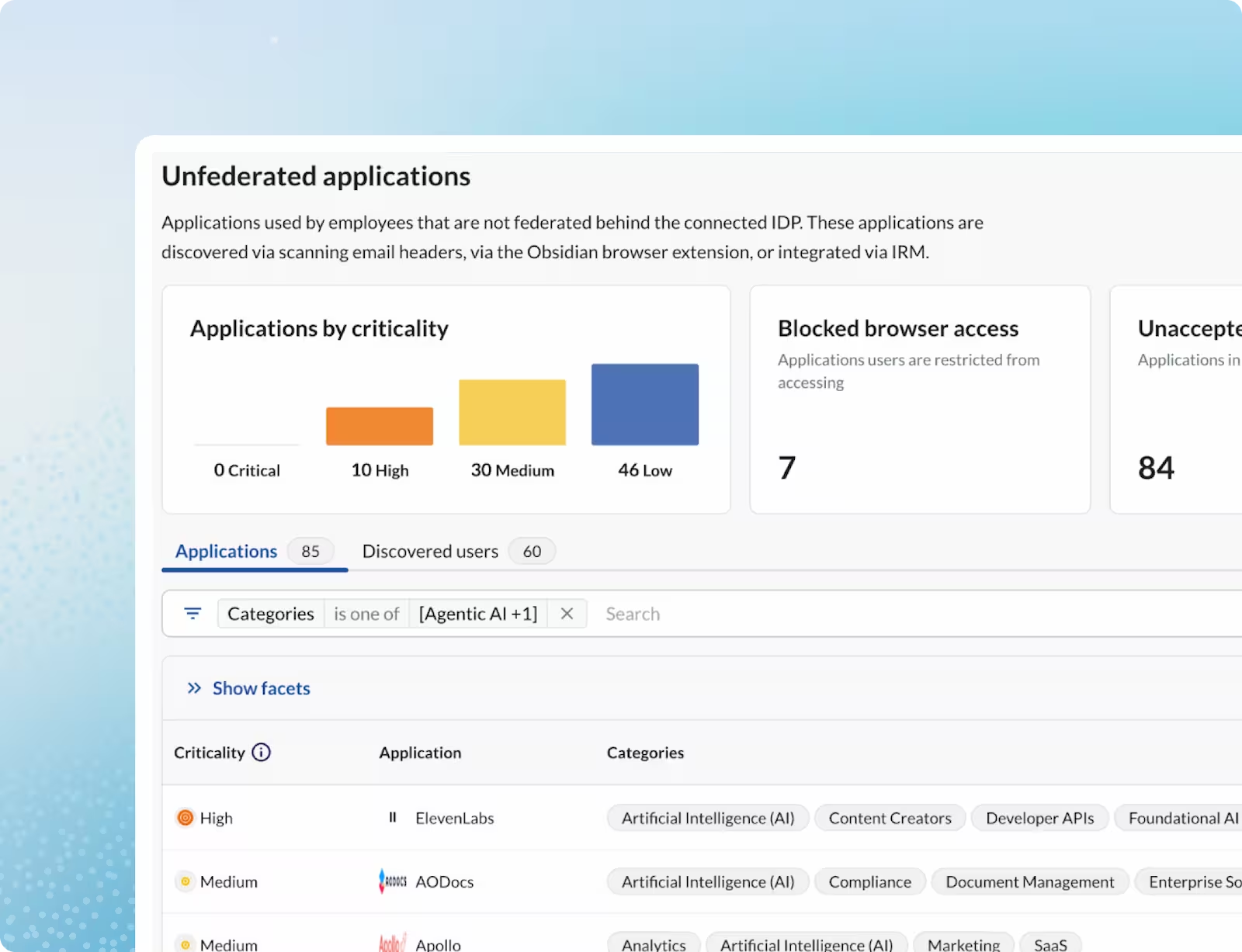

Bring every unsanctioned AI tool and browser extension out of the dark with browser-level discovery that catches what traditional security tools miss, before sensitive data is exfiltrated.

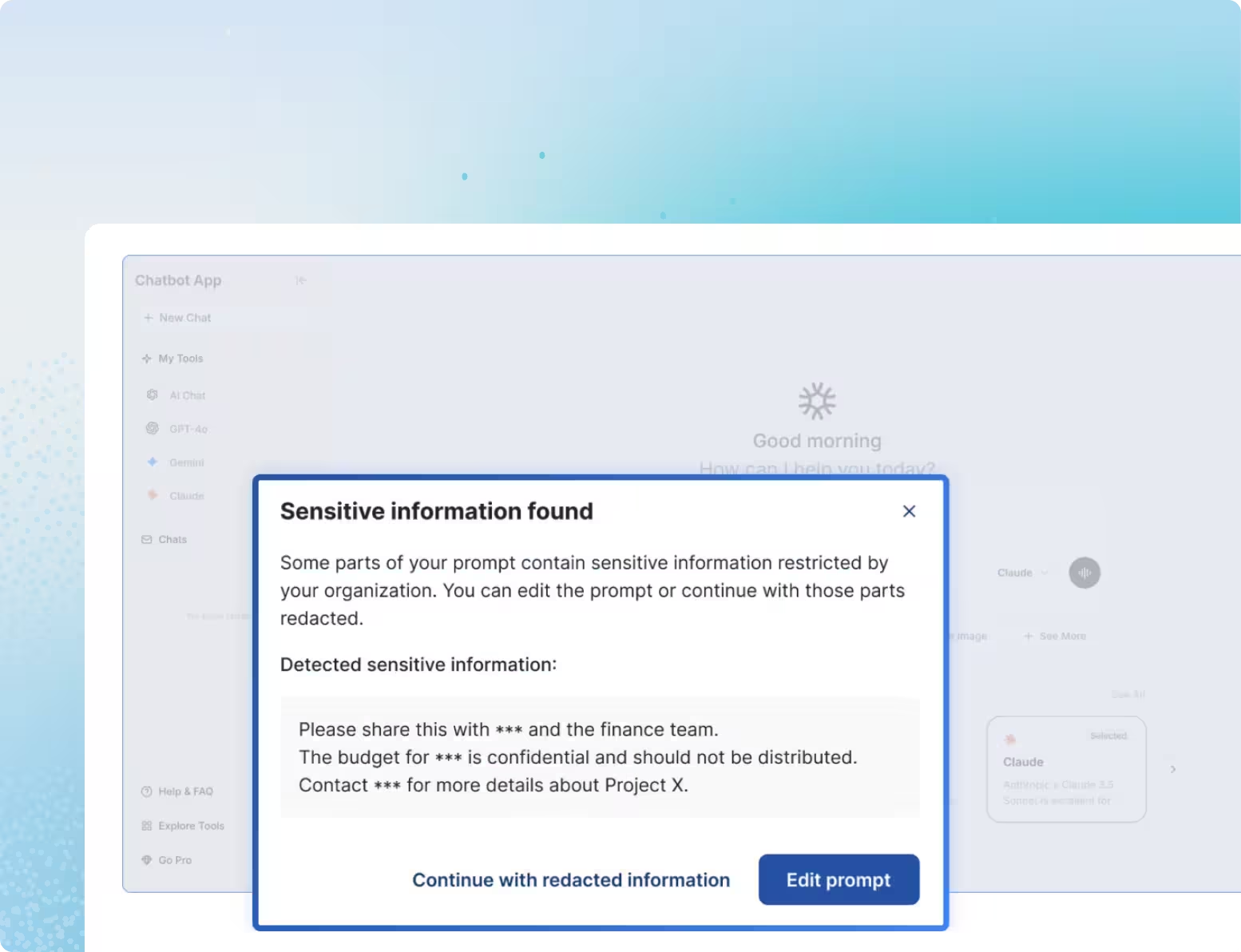

Prevent proprietary data from being uploaded to unmonitored and personal-use third-party GenAI platforms such as ChatGPT and Claude by catching and blocking sensitive prompts at the source, before they ever leave the browser.

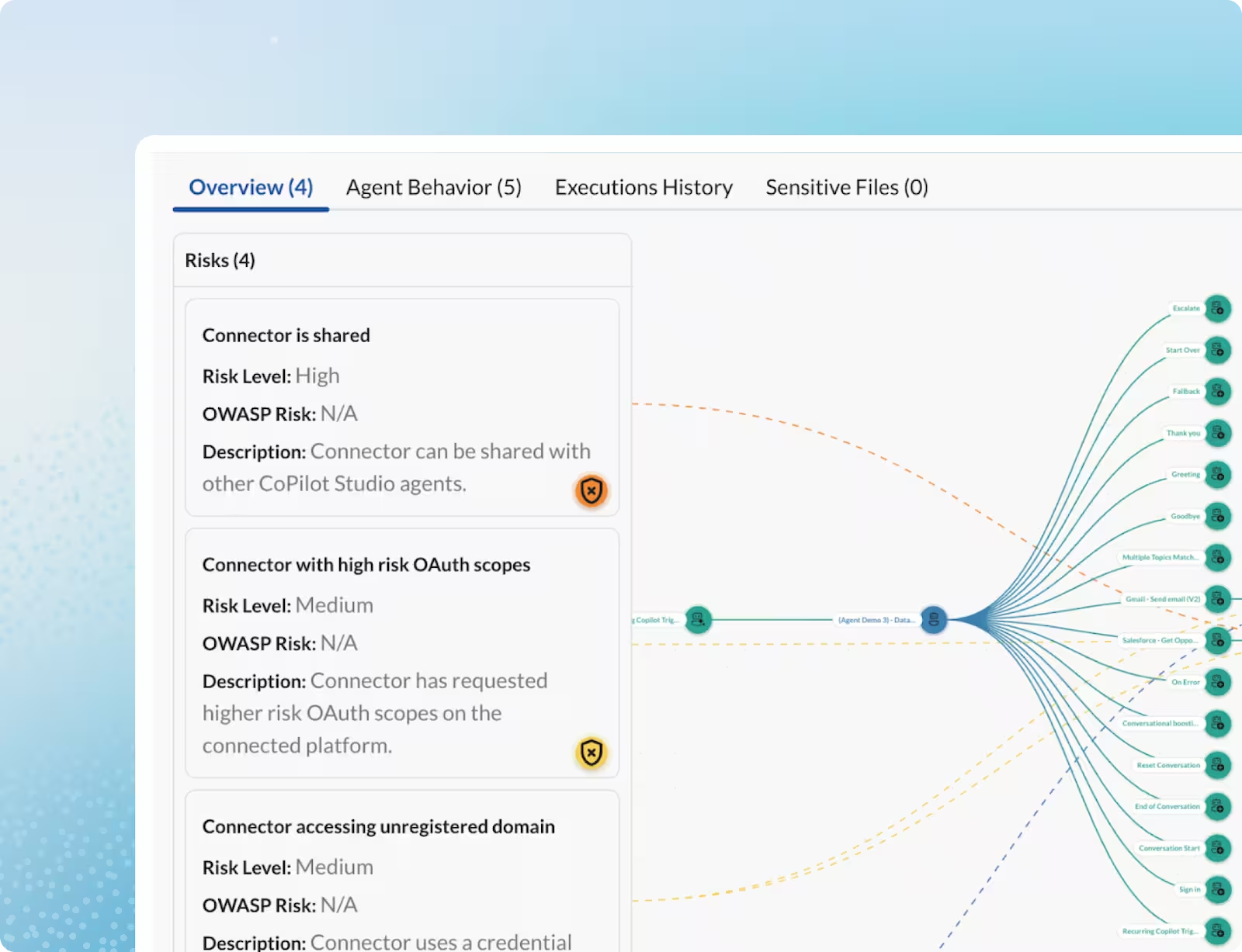

Map every agent's real permissions, trace the tools and MCP servers it invokes, and enforce runtime guardrails that block privilege escalation and excessive data access before they execute.

Enforce least privilege across every AI agent and human identity by continuously comparing the permissions agents actually need against the permissions they hold, and right-sizing the difference.
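At its simplest, right-sizing is a set comparison: scopes an identity holds but never exercises are candidates for revocation. The sketch below is purely illustrative and is not how Obsidian computes it. It assumes permissions can be modeled as flat scope strings and that observed usage comes from some activity log; the `AgentPermissions` record, the `excess_permissions` and `right_size` helpers, and the scope names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPermissions:
    """Hypothetical record of one agent's granted scopes and observed usage."""
    agent_id: str
    granted: set[str]
    # Scopes the agent actually exercised during an observation window
    # (assumed to be derived from activity logs; not a real product API).
    used: set[str] = field(default_factory=set)


def excess_permissions(agent: AgentPermissions) -> set[str]:
    """Scopes the agent holds but never exercised: candidates for revocation."""
    return agent.granted - agent.used


def right_size(agent: AgentPermissions) -> AgentPermissions:
    """Return a copy whose grants are trimmed to the scopes observed in use.

    Only scopes that were both granted and used are kept, so usage of an
    ungranted scope is never turned into a new grant.
    """
    return AgentPermissions(
        agent_id=agent.agent_id,
        granted=agent.granted & agent.used,
        used=set(agent.used),
    )


if __name__ == "__main__":
    bot = AgentPermissions(
        agent_id="support-bot",
        granted={"crm:read", "crm:write", "files:read", "admin:users"},
        used={"crm:read", "files:read"},
    )
    print(sorted(excess_permissions(bot)))  # ['admin:users', 'crm:write']
    print(sorted(right_size(bot).granted))  # ['crm:read', 'files:read']
```

In practice the observation window matters: a scope used once a quarter will look unused over a one-week window, so a real review would treat the output as a recommendation rather than an automatic revocation.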

Detect and block high-risk agent actions at execution time, including privilege escalation, excessive data access, and policy violations, before they impact the business.

AI security is the practice of governing every dimension of AI risk in your organization: the tools employees use, the data they share through prompts, and the autonomous agents that act on their behalf. Without it, sensitive data leaks through unsanctioned tools, proprietary information enters GenAI prompts undetected, and agents operate with permissions no one intended to grant.

Traditional security tools were built to monitor human activity across known systems. They can't see browser-based AI tool usage, can't intercept what employees type into a GenAI prompt, and can't track agents that operate through OAuth tokens and API keys rather than SSO. AI risk requires a purpose-built layer of visibility and enforcement that legacy tools were never designed to provide.

Shadow AI refers to any AI tool, extension, or GenAI application that employees use without security approval. Obsidian detects it through browser-level discovery that surfaces every tool in use, including personal accounts and unmanaged devices, giving security teams a continuous, accurate inventory.

Model Context Protocol (MCP) servers are the infrastructure layer that connects AI agents to backend tools and data systems. When an agent invokes an MCP server, it inherits that server's permissions, often including access to systems the invoking user cannot reach directly. Without visibility into MCP usage, organizations have no way to understand the true blast radius of their agent deployments.
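To make the blast-radius point concrete, the sketch below treats an agent's effective reach as the user's own permissions plus the permissions of every MCP server the agent invokes, following the inheritance described above. It is a simplified illustration, not Obsidian's method: it assumes permissions are flat strings, that each server's permissions pass through to the agent wholesale, and that servers do not chain to other servers. The function names and server and scope names are hypothetical.

```python
def effective_access(
    user_perms: set[str],
    invoked_servers: list[str],
    server_perms: dict[str, set[str]],
) -> set[str]:
    """Union of the user's permissions and those of every invoked MCP server."""
    reach = set(user_perms)
    for server in invoked_servers:
        # Unknown servers contribute nothing in this simplified model.
        reach |= server_perms.get(server, set())
    return reach


def access_beyond_user(
    user_perms: set[str],
    invoked_servers: list[str],
    server_perms: dict[str, set[str]],
) -> set[str]:
    """Permissions the agent gains through MCP servers that the user lacks."""
    return effective_access(user_perms, invoked_servers, server_perms) - user_perms


if __name__ == "__main__":
    servers = {
        "github-mcp": {"repo:read", "repo:write"},
        "db-mcp": {"db:prod:read"},
    }
    user = {"jira:read"}
    print(sorted(access_beyond_user(user, ["github-mcp", "db-mcp"], servers)))
    # ['db:prod:read', 'repo:read', 'repo:write']
```

The gap returned by `access_beyond_user` is the part of the blast radius that is invisible if you only audit the human user's own grants.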