Just one prompt can confuse an agent. Stop high-risk actions before they happen with flexible guardrails that fire at runtime.

True runtime security requires signals from both agents and the apps they act inside. Start where you are today, and add enterprise app context when you're ready to stop risks that neither source reveals alone.

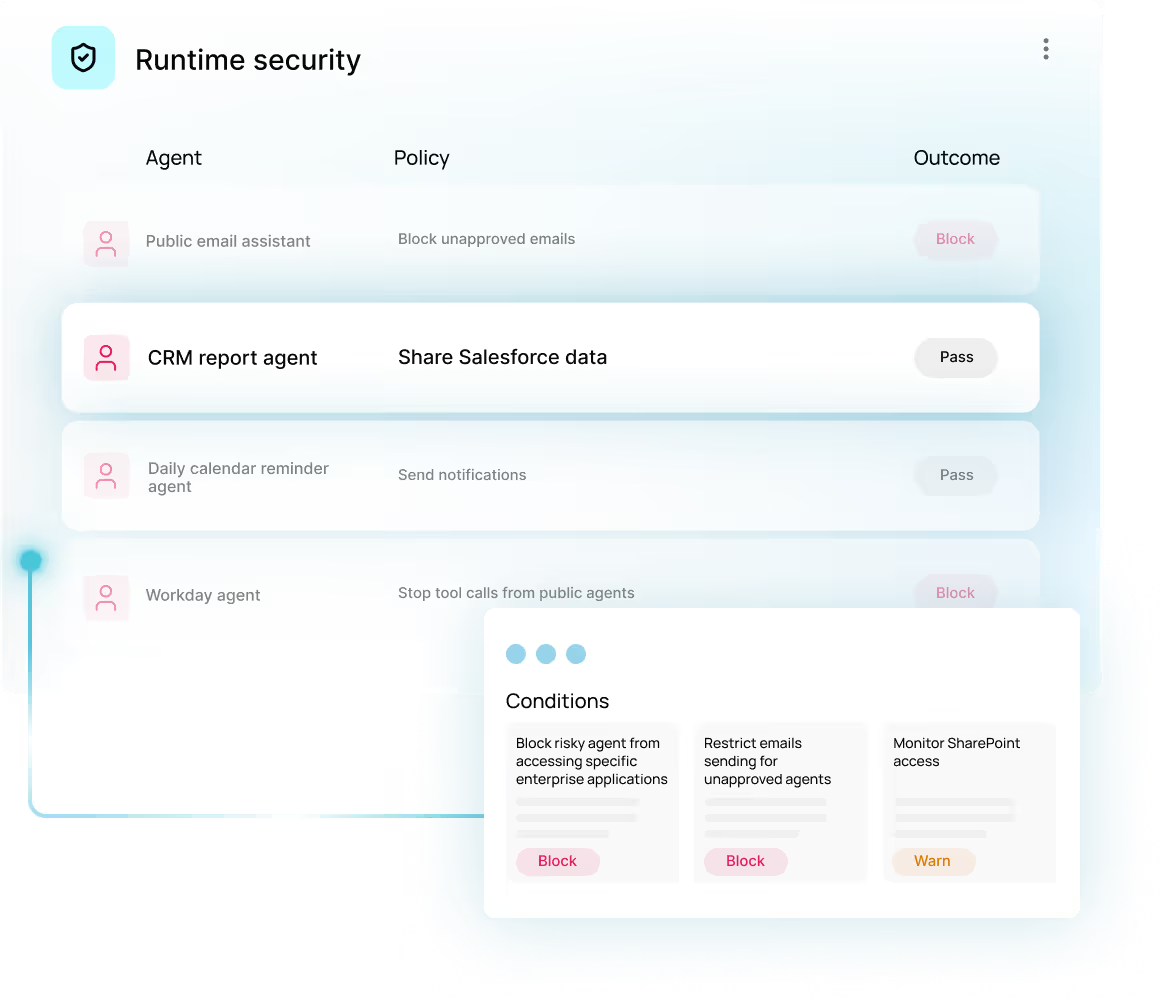

Configuration reviews show what an agent is allowed to do, while runtime security evaluates what it is actually doing at execution time. This helps stop risky actions that are technically permitted but still unsafe or unintended.

The platform blocks high-risk, policy-violating executions before they complete. Examples include unexpected tool calls, suspicious data access spikes, malicious or misinterpreted prompts, and risky action chaining.

Yes. Policies can be set to either track or block executions based on your organization’s risk tolerance.
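To make the track-versus-block choice concrete, here is a minimal sketch of how such a policy setting could behave. It is an illustration only, not Obsidian's configuration format: the `Policy` class, the `Mode` values, the `risk_threshold` field, and the `decide` function are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    """Hypothetical enforcement modes mirroring 'track' and 'block'."""
    TRACK = "track"
    BLOCK = "block"


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy shape; not a real product schema."""
    name: str
    mode: Mode
    risk_threshold: int  # assumed 0-100 scale; executions at or above it trigger the policy


def decide(policy: Policy, risk_score: int) -> str:
    """Return the outcome for one execution under one policy."""
    if risk_score < policy.risk_threshold:
        return "allow"
    # Same trigger, different outcome depending on the configured mode.
    return "block" if policy.mode is Mode.BLOCK else "log"


strict = Policy("bulk-export", Mode.BLOCK, risk_threshold=70)
lenient = Policy("bulk-export", Mode.TRACK, risk_threshold=70)
```

Under these assumptions, the same execution scored at 85 is stopped by `strict` and only recorded by `lenient`, which is one way a team with a lower risk tolerance could tighten enforcement without rewriting the policy.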

Every agent action can be evaluated against OWASP-aligned risk factors in real time. Webhooks can then be used to intercept high-risk executions before they complete.
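The evaluate-then-intercept flow described above might look roughly like the sketch below. Everything in it is assumed for illustration: the payload fields (`execution_id`, `signals`), the signal names and weights, the 0-100 scale, and the `handle_webhook` function are hypothetical, and the weights are not taken from any OWASP publication or from Obsidian's product.

```python
import json

# Hypothetical signal weights on an assumed 0-100 scale.
# The signal names echo the examples on this page; the numbers are made up.
RISK_WEIGHTS = {
    "unexpected_tool_call": 50,
    "data_access_spike": 30,
    "suspicious_prompt": 40,
    "risky_action_chain": 40,
}


def score_action(signals: list[str]) -> int:
    """Sum the weights of known signals, capped at 100; unknown signals add 0."""
    return min(100, sum(RISK_WEIGHTS.get(s, 0) for s in signals))


def handle_webhook(body: str, block_at: int = 70) -> dict:
    """Parse a (hypothetical) pre-execution webhook payload and return a verdict."""
    event = json.loads(body)
    score = score_action(event.get("signals", []))
    verdict = "deny" if score >= block_at else "allow"
    return {
        "execution_id": event.get("execution_id"),
        "risk_score": score,
        "verdict": verdict,
    }


example = json.dumps({
    "execution_id": "exec-123",  # placeholder identifier
    "signals": ["unexpected_tool_call", "data_access_spike"],
})
result = handle_webhook(example)
```

In this sketch the verdict is returned before the action runs, which is what lets a webhook stop an execution rather than merely report it afterward.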

Obsidian supports platforms including n8n, Agentforce, Vertex, Copilot, Foundry, Bedrock, ChatGPT, Cursor, and Claude.

Agent data alone may miss risks that only become clear when correlated with SaaS and identity telemetry. The combined view helps reveal issues like sensitive data access across apps or privilege-related exposure behind an agent’s activity.
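As a rough illustration of why the combined view matters, the sketch below joins agent activity with identity records. The field names (`acting_as`, `resource_sensitivity`, `privileged`) and the `correlate` function are hypothetical and do not reflect any real telemetry schema.

```python
def correlate(agent_events: list[dict], identity_records: list[dict]) -> list[dict]:
    """Flag agent events that touch sensitive data while acting as a privileged identity.

    Neither input is enough alone: the agent event does not say the identity
    is privileged, and the identity record does not say what the agent accessed.
    """
    by_identity = {rec["identity"]: rec for rec in identity_records}
    findings = []
    for event in agent_events:
        rec = by_identity.get(event["acting_as"])
        if rec and rec["privileged"] and event["resource_sensitivity"] == "high":
            findings.append({"agent": event["agent"], "identity": rec["identity"]})
    return findings


# Made-up sample data for illustration only.
events = [
    {"agent": "report-bot", "acting_as": "svc-admin", "resource_sensitivity": "high"},
    {"agent": "faq-bot", "acting_as": "svc-readonly", "resource_sensitivity": "high"},
]
identities = [
    {"identity": "svc-admin", "privileged": True},
    {"identity": "svc-readonly", "privileged": False},
]
findings = correlate(events, identities)
```

With this sample data only `report-bot` is flagged, because the privilege behind its activity appears only once the two sources are joined.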

Obsidian continuously inventories agents, users, MCP servers, LLMs, owners, and connected tools in one place. The inventory also surfaces AI-specific risk factors such as maker mode, org-wide access, public exposure, and stale or dormant connections.